Brief: What would data collection devices that collected data on fear look like? And how could you intervene in such data collection systems?

The brief for this project came out of my thesis work, where I was researching the more technical aspects of machine learning as well as its impacts, specifically through predictive policing. For more on how this project fits into the overall thesis, see the process case study.

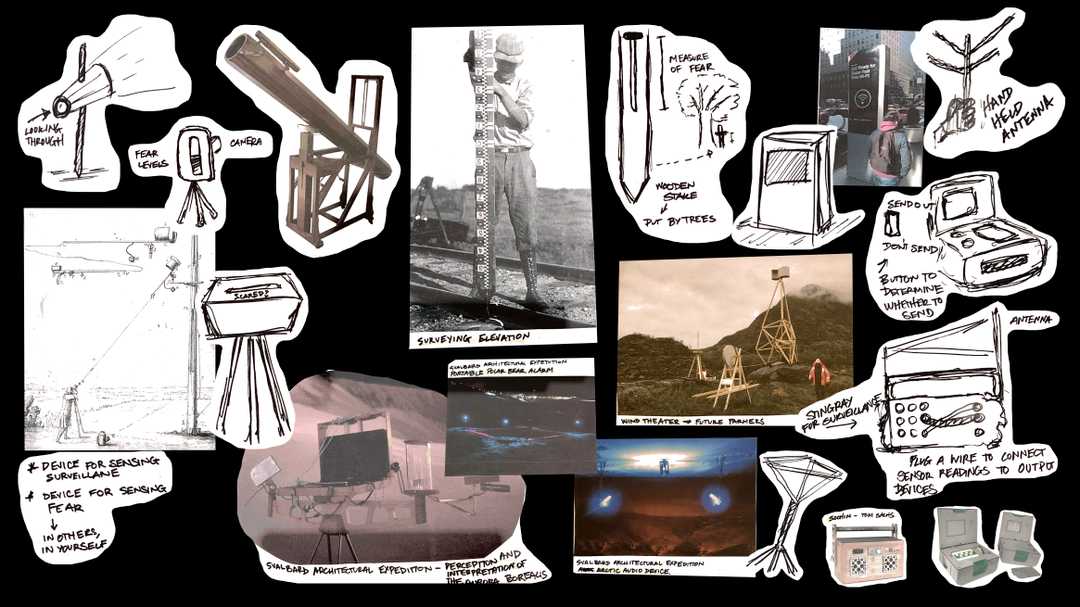

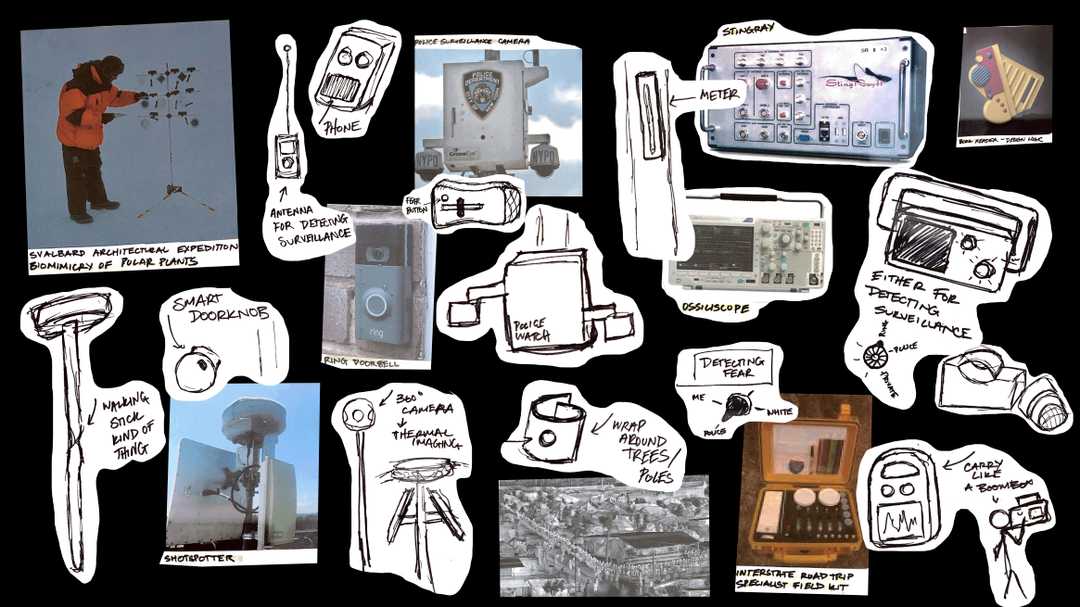

Secondary Research

The work started by looking at the form and function of existing data collection devices, with an additional focus on surveillance technologies. While doing this research, I was also sketching out concepts for speculative devices.

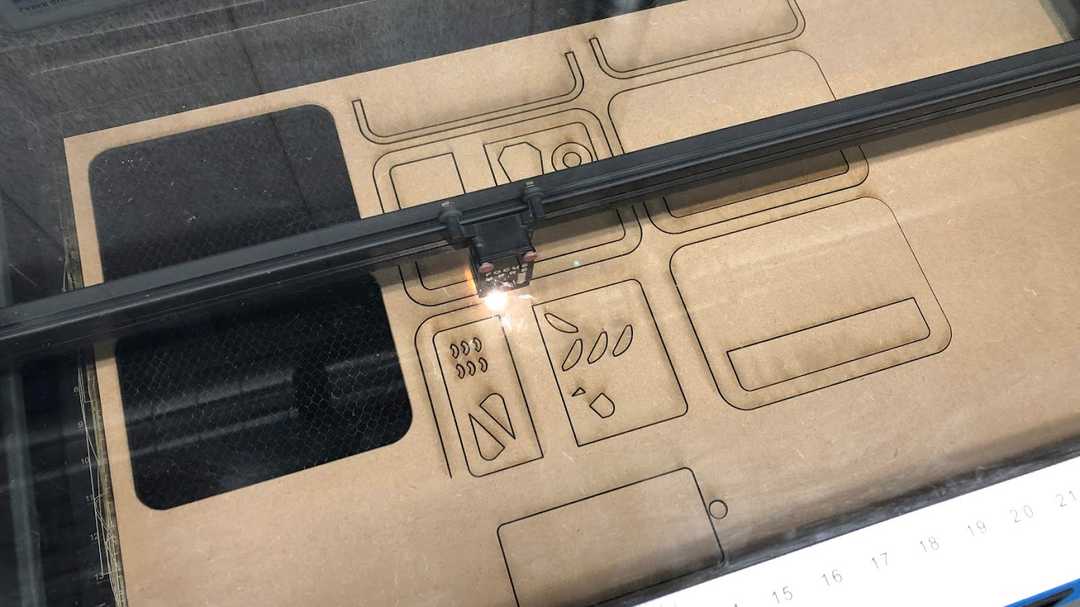

Prototyping

Using cardboard and simple electronics, I began to prototype the shape and feel of different data collection devices. With these early prototypes, I began to develop rough stories of the context in which these devices might be used, which, in turn, influenced the design of the devices.

Developing the Story

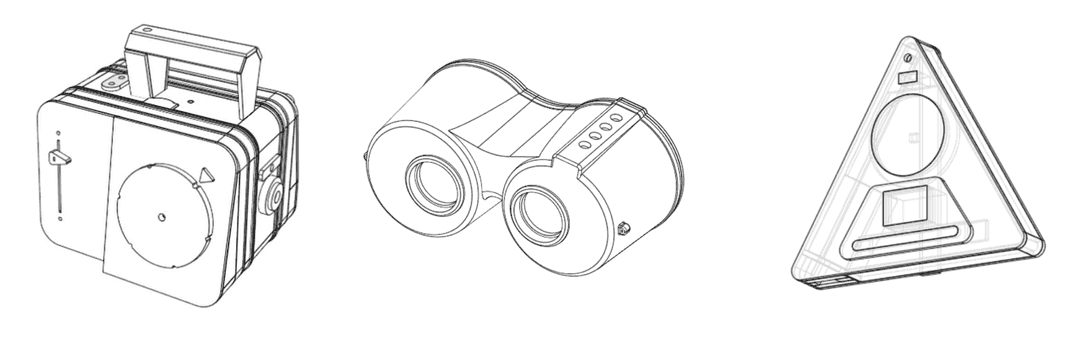

As I continued to refine the data collection devices, I also continued to refine the stories that would go along with the physical artifacts. In some cases, if a story did not fit thematically with the rest of the artifacts, I had to leave it behind.

The two final scenarios looked at data collection and safety at two different scales: a corporate one and a municipal one.

Scenario 1: Corporate Data Collection

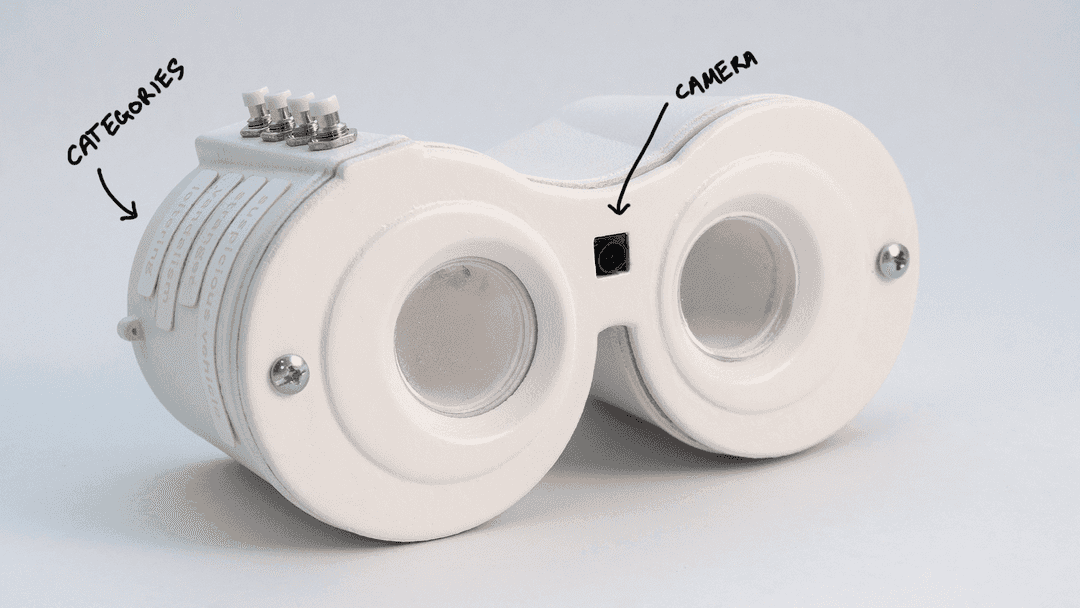

The first speculative scenario is an extrapolation of current consumer surveillance tools like the Ring doorbell. This scenario imagines a major tech company creating a machine learning product that allows users to collect images to train image classification algorithms personalized to their neighborhood.

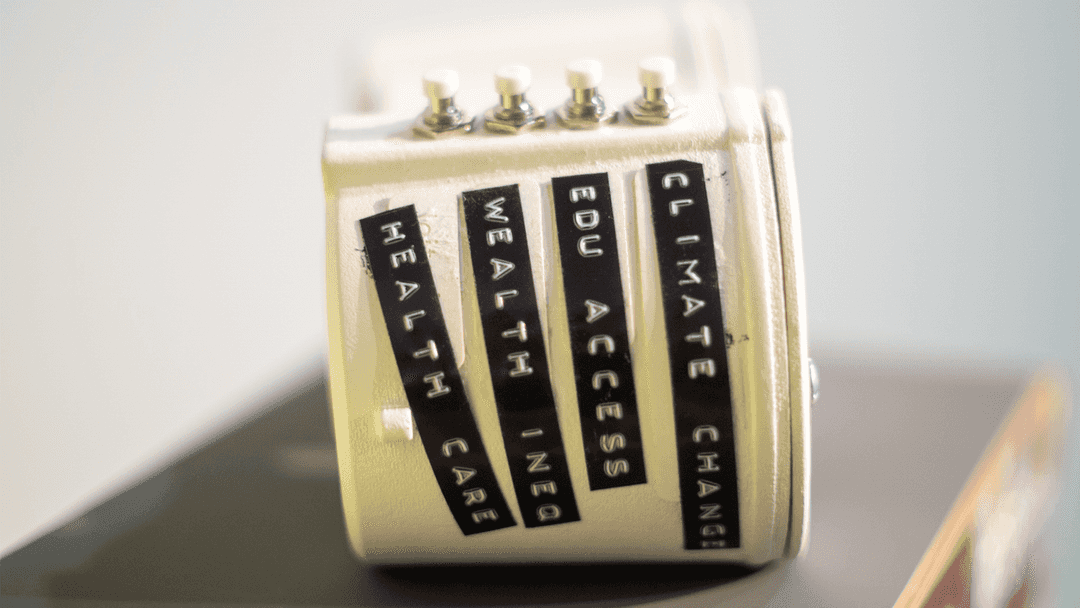

The system would pull data from neighborhood social media apps like Nextdoor to create labels like “suspicious activity” and “loitering” that end up being code words for xenophobia and racism.

What would happen if the categories were relabeled to reflect structural and systemic causes rather than the symptoms of those issues? Could the infrastructure be reappropriated to identify McMansions or other symbols of inequity, rather than automate oppression and prejudice?

Scenario 2: Municipal Data Collection

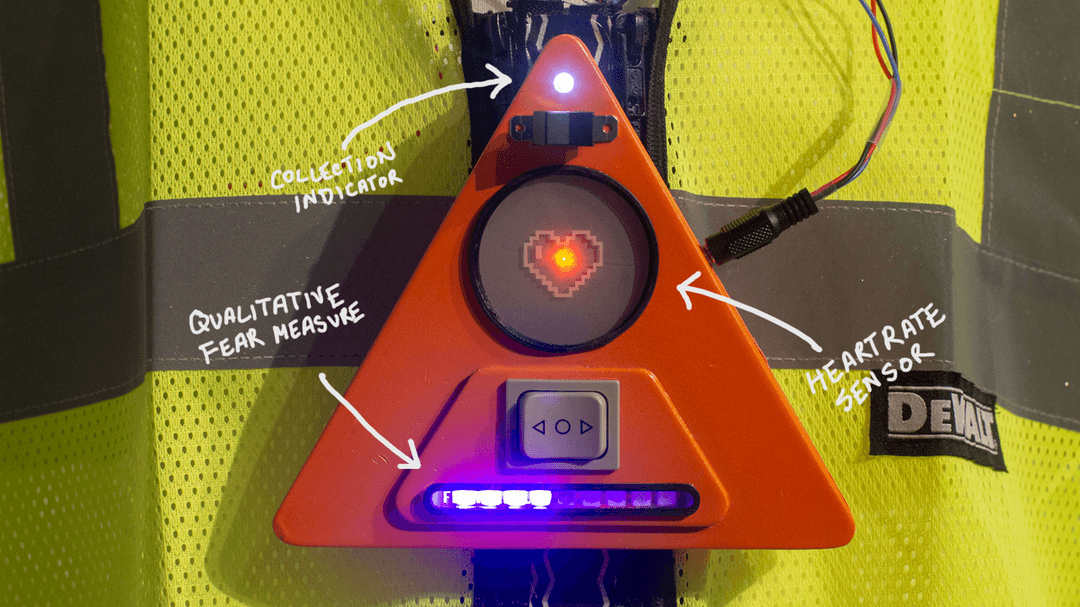

The second scenario imagines a local smart city initiative where residents become data surveyors to create a dataset mapping residents’ sense of fear, safety, and security within the city in an effort to determine where city funds should be spent.

This scenario is meant to facilitate conversations around how smart city tech initiatives typically sample only the most well-off, property-owning residents. It also asks why the main solution we see proposed to solve this “sampling bias” is to put already vulnerable people of color under further surveillance.