Brief: If machine learning could be interpreted as a form of worldbuilding, how could you use it to generate imaginary worlds?

(For more details on how this brief emerged as part of my thesis work, see the process case study.)

Initial Concept

The initial concept uses Google Street View as a data source, letting users create imaginary data.

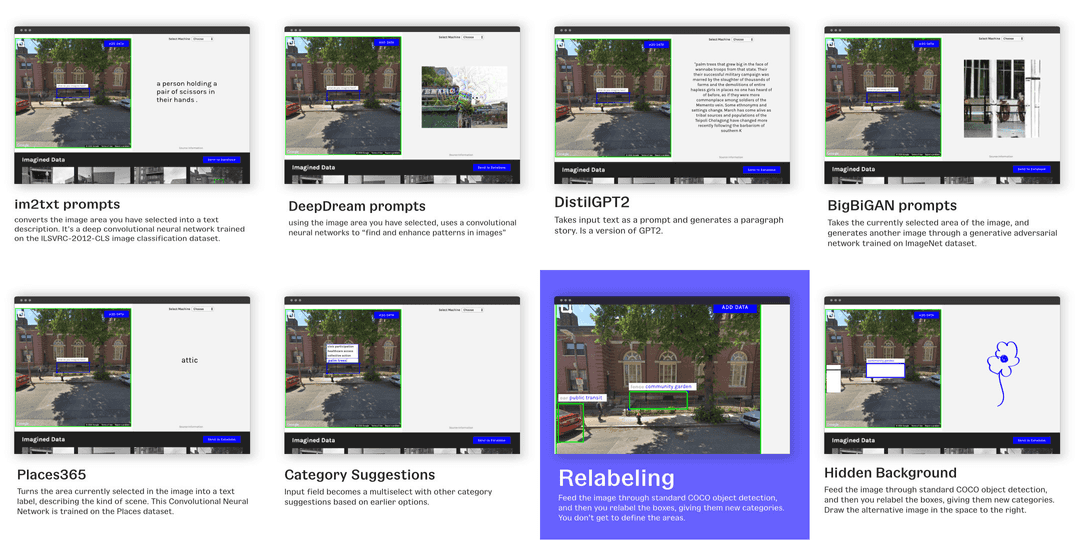

The initial prototype highlighted the main challenge: giving users prompts that help them create and label imaginary data. The following variations explored different ways to help users label and create this fictional data.

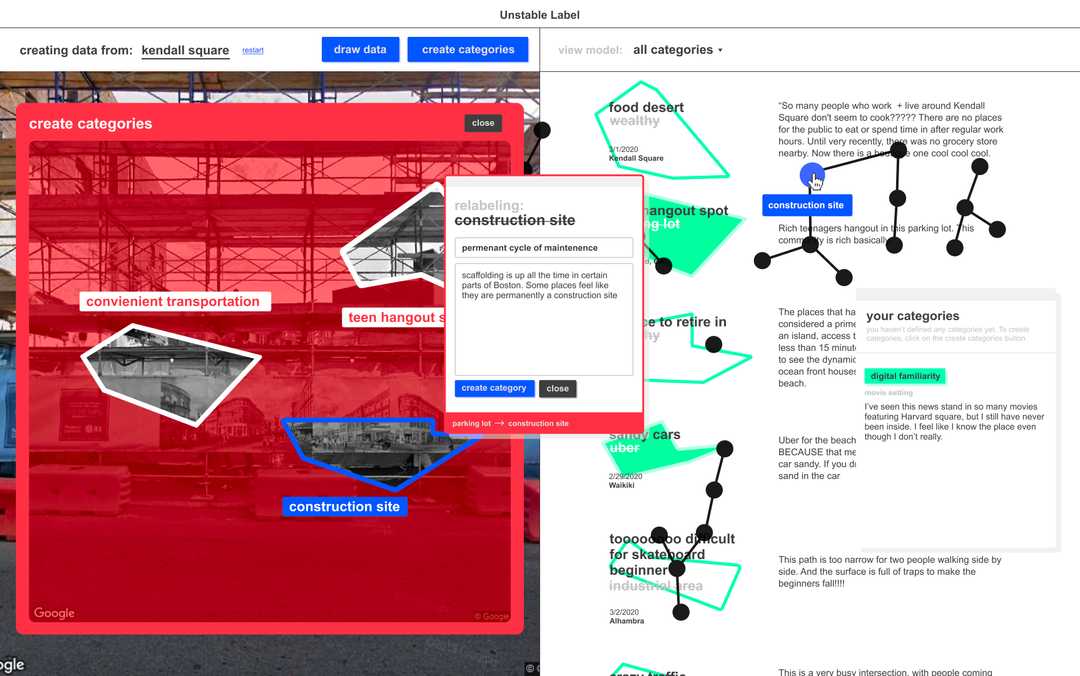

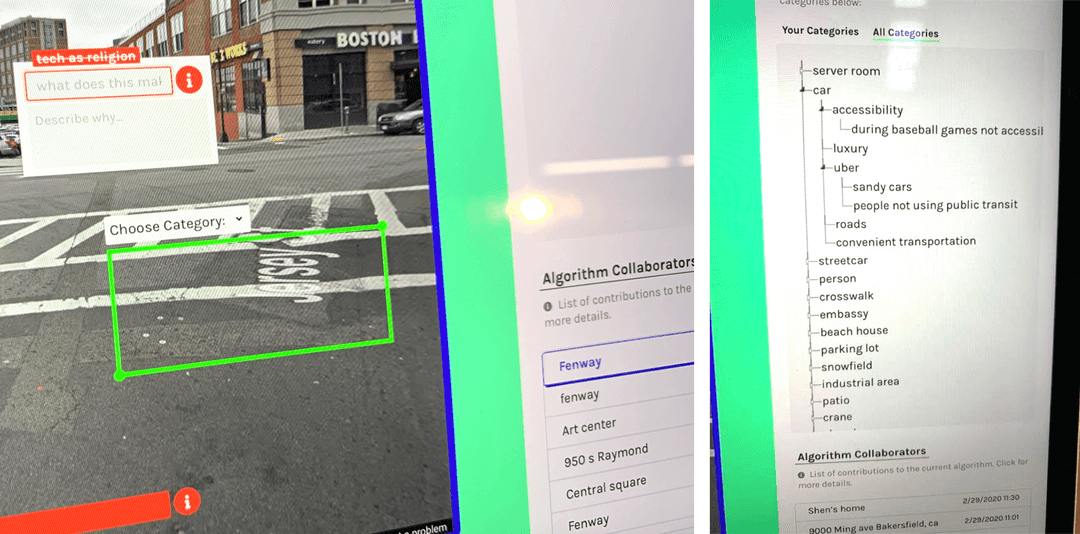

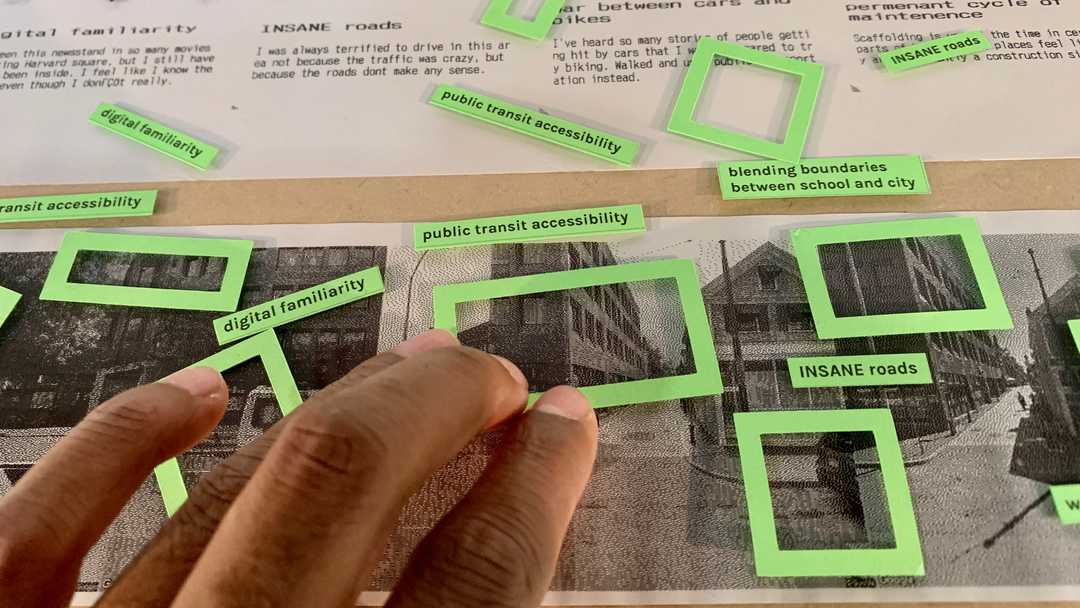

Through presentations, critiques and discussions, the most promising idea turned out to be a relabeling system, where users relabel existing labels as a way to create new categories.

Developing the Concept

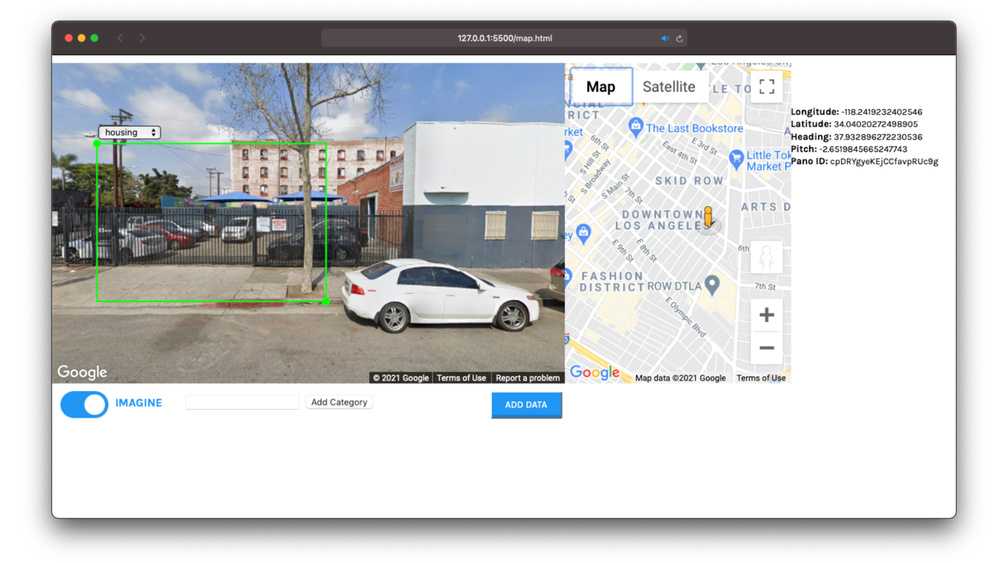

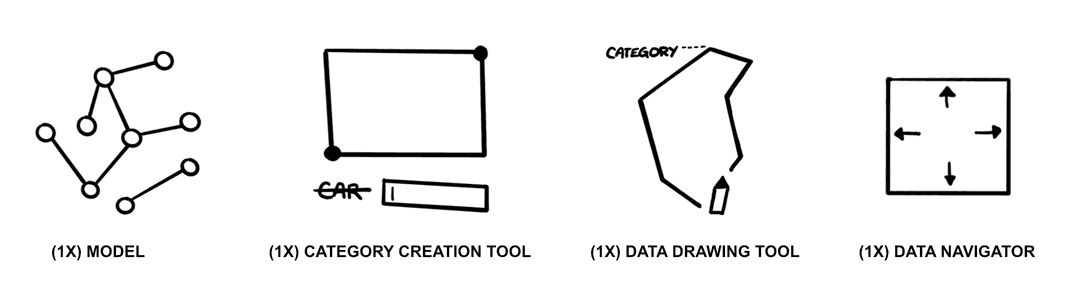

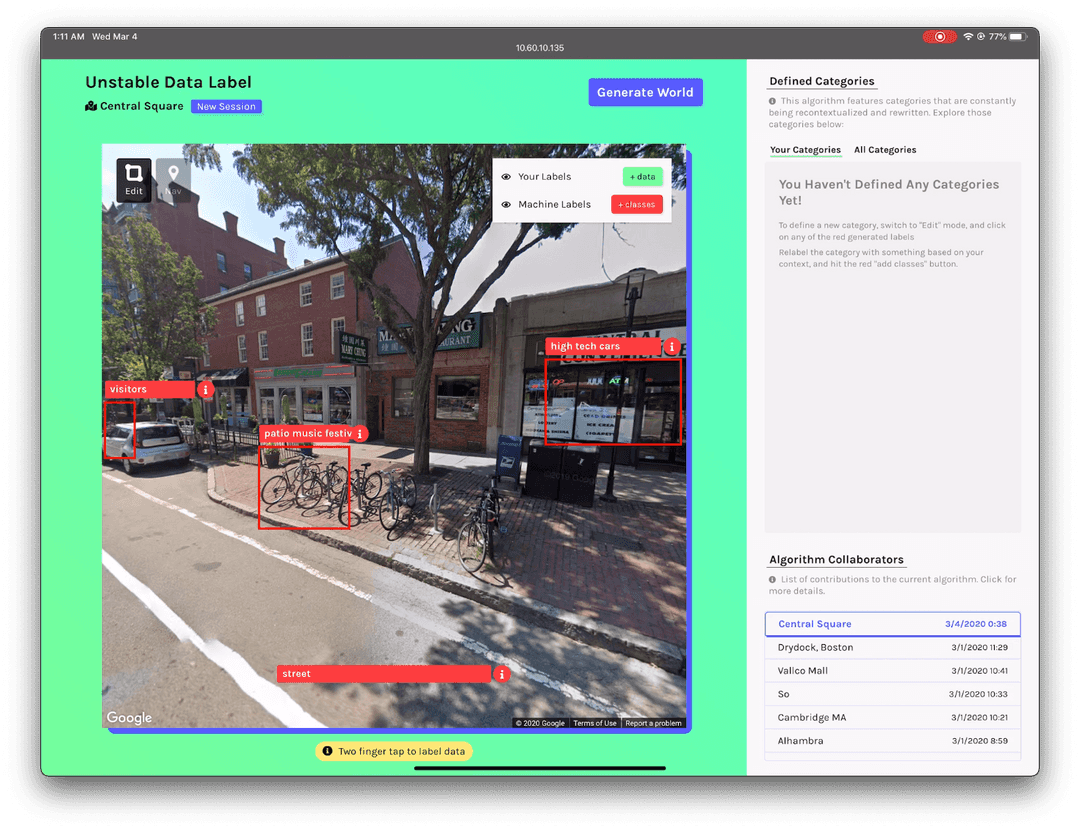

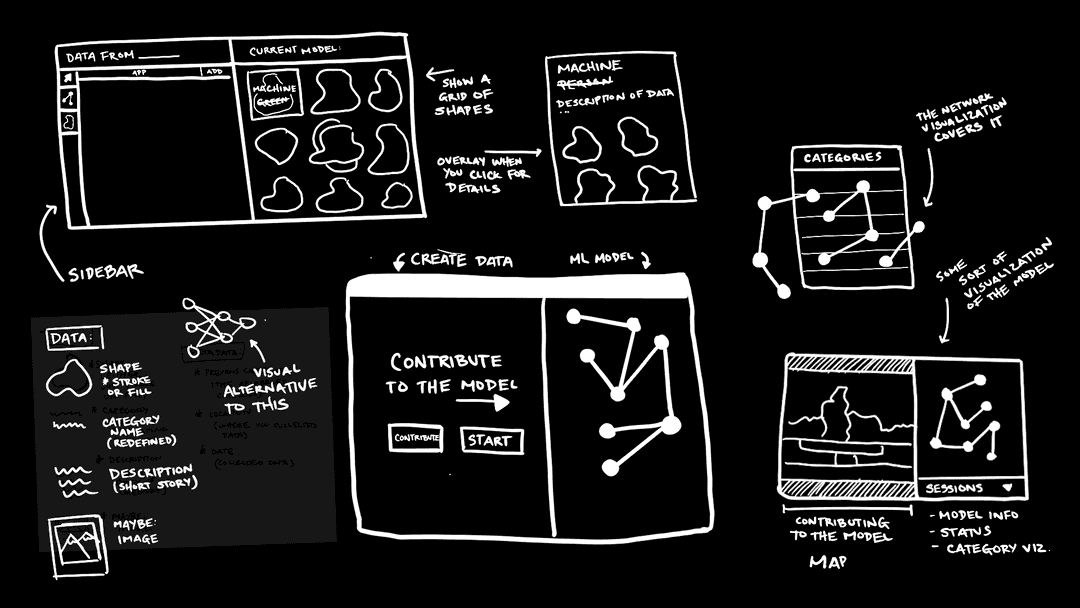

To develop the concept further, I designed and built a functional web app to run on an iPad. Four key components emerged through the process of designing and building it.

One key feature added to this prototype was a branching tree that showed the relationships between the categories being created.

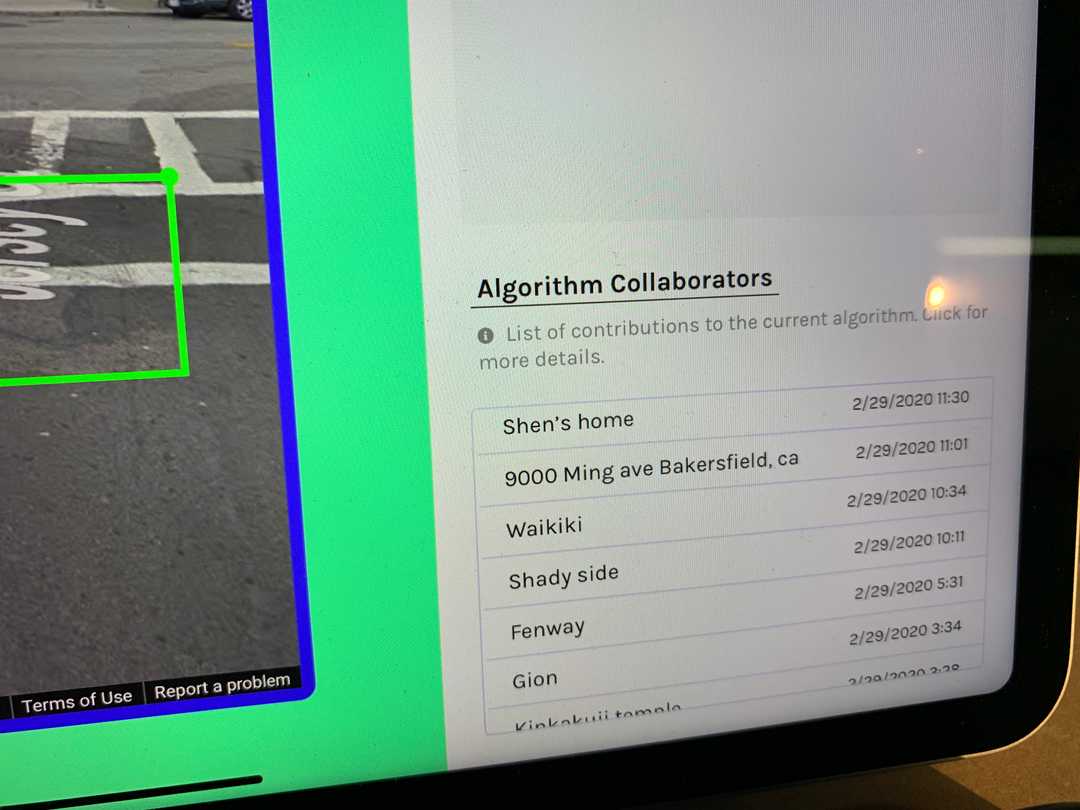

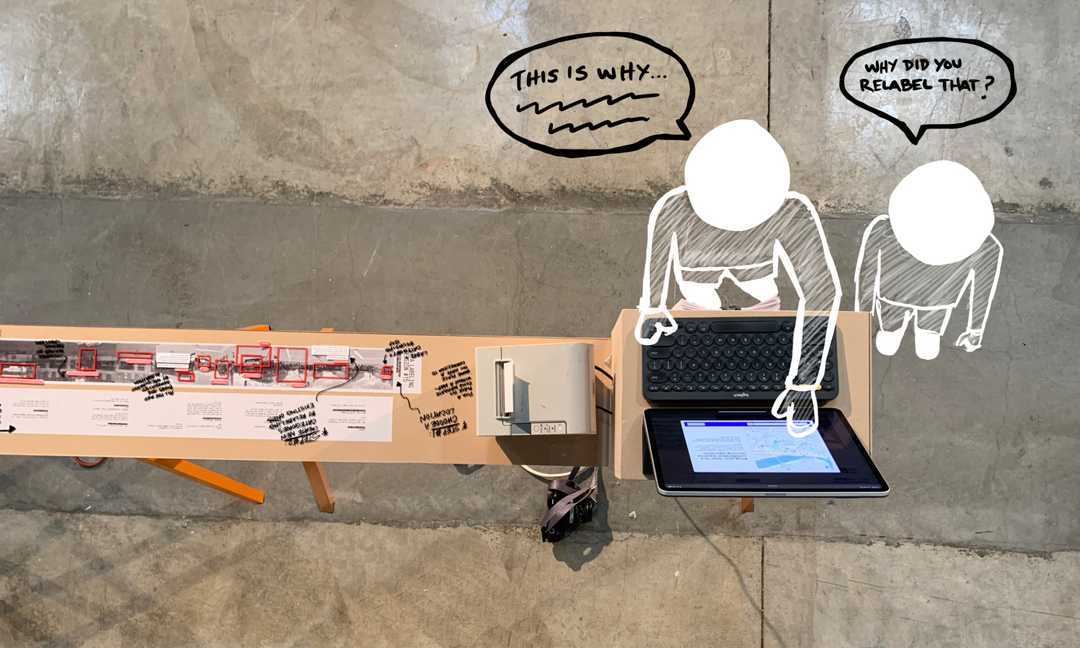

This version of the app was used in a gallery show, in combination with a "workshop"-style deconstruction of the app's relabeling features. The gallery show and presentation provided opportunities for feedback:

- Understanding how legible the concept was to users

- Finding which parts of the process were the most difficult to complete

- Identifying opportunities for new features

Refining the UI Design

The next round of iterations focused more on the UI design, figuring out how the visual aesthetics of the app could better represent the core concepts.

Some key additions were:

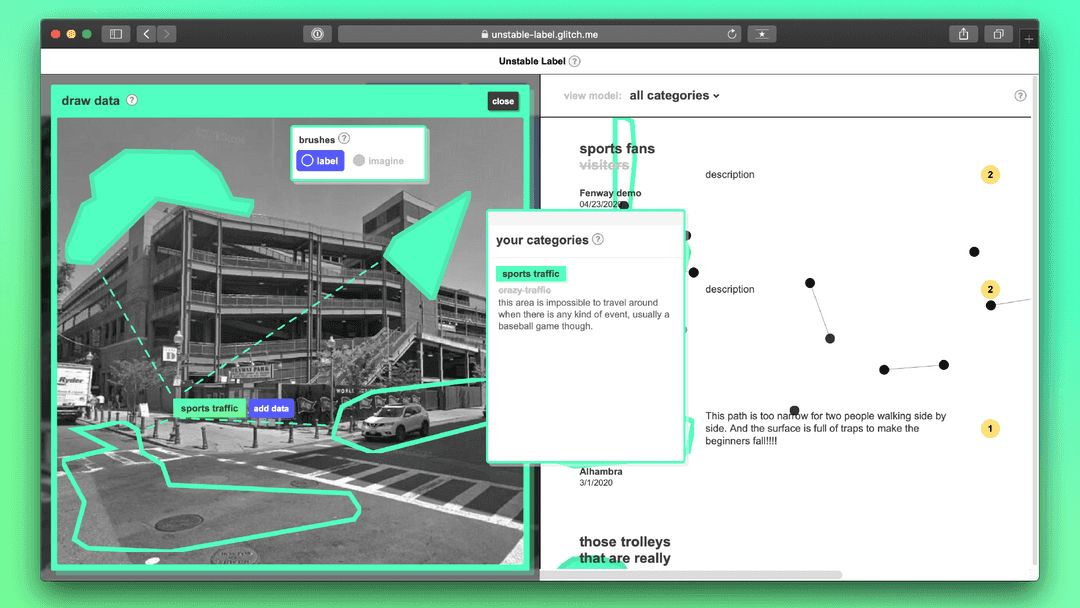

- Letting users draw free-form shapes to create bounding boxes

- Representing the model as a network diagram rather than a hierarchical tree

- Introducing a UI with lots of overlapping elements, as a way to visually represent the layered complexity that is often hidden in "simple" labeling tasks.
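One way to turn a free-form shape into a bounding box is to take the extremes of the drawn points. The following is a minimal sketch, assuming the stroke arrives as a list of (x, y) pixel coordinates; the function name and the tuple layout are illustrative and not taken from the app itself.

```python
from typing import Iterable, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def bounding_box(stroke: Iterable[Point]) -> Box:
    """Return the smallest axis-aligned box that contains every point of a stroke."""
    points = list(stroke)
    if not points:
        raise ValueError("stroke must contain at least one point")
    xs = [x for x, _ in points]
    ys = [y for _, y in points]
    return (min(xs), min(ys), max(xs), max(ys))


# Hypothetical stroke traced around an object on screen.
stroke = [(120, 80), (180, 60), (210, 140), (150, 170), (110, 130)]
print(bounding_box(stroke))  # (110, 60, 210, 170)
```

Keeping the original stroke alongside the box would preserve the hand-drawn quality of the annotation while still giving the model a conventional rectangle.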

Final Design

For a more detailed, in-depth look at the final design and the system, visit the user manual.

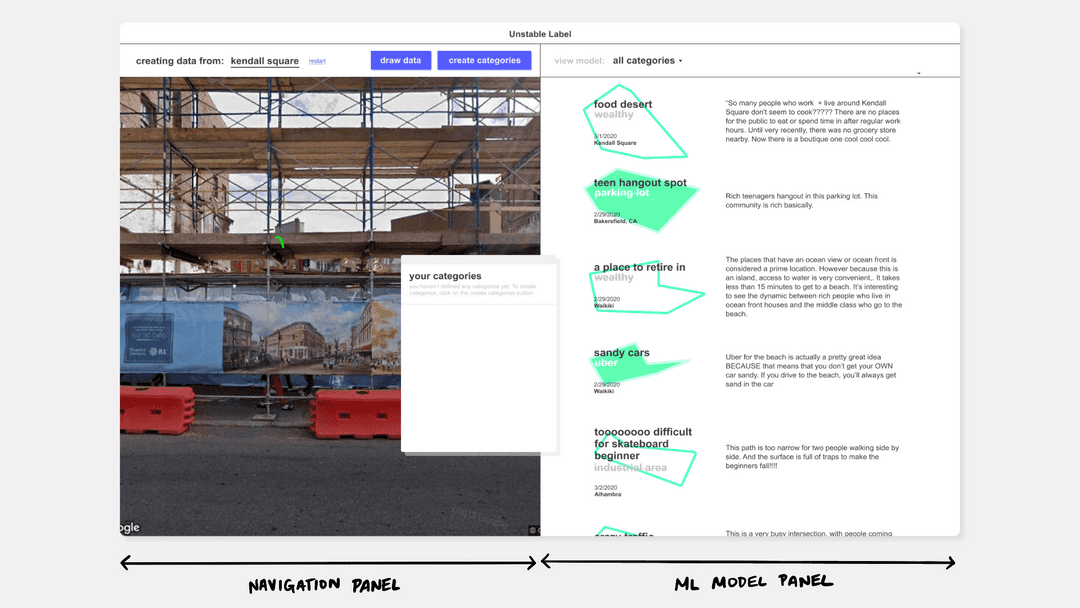

System Overview

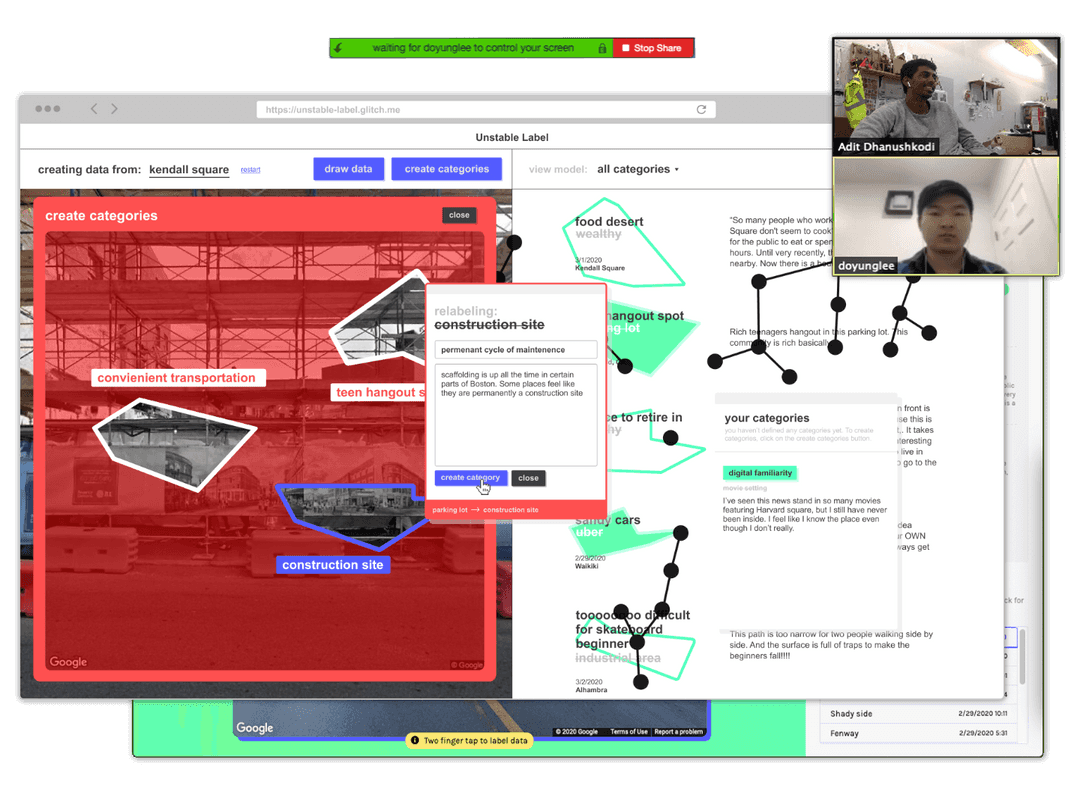

The left panel of the system allows you to navigate through Google Street View, finding sites of interest to contribute to the collective machine learning model.

The right panel represents the model, listing all the "data" that has been contributed to the project, featuring not only labels, but also the stories that put them into context.

Step 1: Local Navigation

Each user contributes from their own local context, either from within Google Street View or from a physical data collection device.

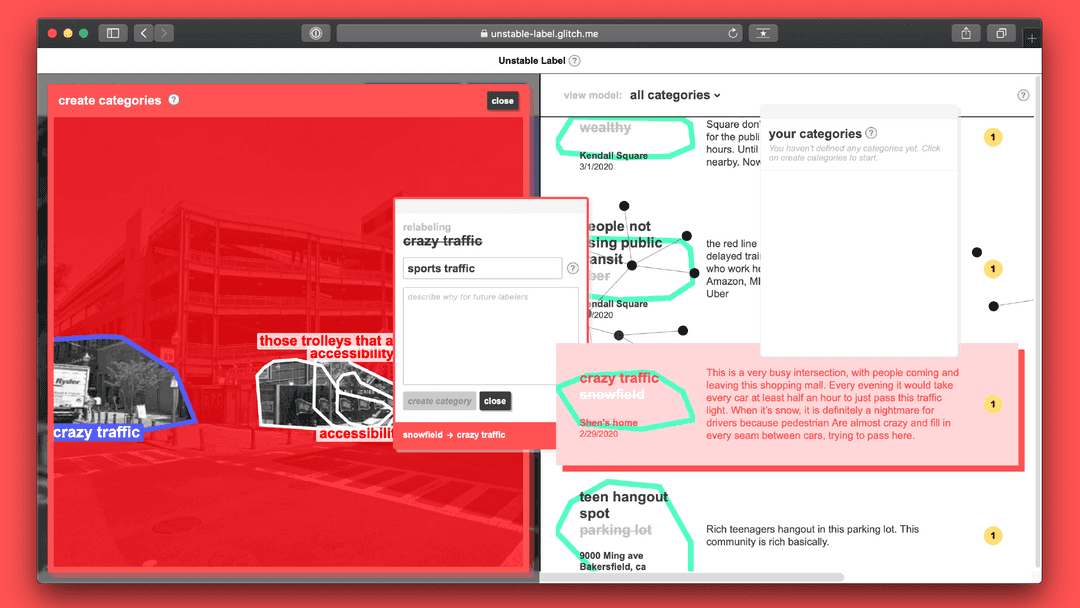

Step 2: Creating Categories

- The current machine learning model evaluates the image from your current location, generating labels.

- You create new categories by relabeling those existing categories. The relabeling process is usually done in a small group. You discuss what the original category makes you think of within your local context.

This is not about "correcting" the algorithm or making it more "accurate." It's about introducing your locally situated perspective into the dataset.
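The relabeling step can be pictured as a small graph in which each new category remembers which labels it came from and the story attached to it. The sketch below is one possible structure, not the app's actual data model; the class names, fields and example labels are all hypothetical. Because a category can be relabeled from more than one source, the result is a network rather than a strict tree.

```python
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Set


@dataclass
class Category:
    name: str
    story: str = ""  # the local context a group attaches to the category
    parents: List[str] = field(default_factory=list)  # labels it was relabeled from


class RelabelGraph:
    """Hypothetical store for model labels and the categories derived from them."""

    def __init__(self, model_labels: Iterable[str]) -> None:
        self.categories: Dict[str, Category] = {n: Category(n) for n in model_labels}

    def relabel(self, original: str, new_name: str, story: str) -> Category:
        """Create (or reuse) `new_name` and record that it derives from `original`."""
        if original not in self.categories:
            raise KeyError(f"unknown category: {original}")
        if original == new_name:
            raise ValueError("a category cannot be relabeled as itself")
        # If the category already exists, its first story is kept.
        category = self.categories.setdefault(new_name, Category(new_name, story))
        if original not in category.parents:
            category.parents.append(original)
        return category

    def lineage(self, name: str) -> Set[str]:
        """All categories that `name` was directly or indirectly relabeled from."""
        seen: Set[str] = set()
        stack = list(self.categories[name].parents)
        while stack:
            current = stack.pop()
            if current not in seen:
                seen.add(current)
                stack.extend(self.categories[current].parents)
        return seen


# Example with made-up labels and stories.
graph = RelabelGraph(["bench", "tree"])
graph.relabel("bench", "waiting spot", "Where neighbors wait for the bus.")
graph.relabel("tree", "waiting spot", "Shade while waiting.")
graph.relabel("waiting spot", "gossip corner", "Where the news travels first.")
print(sorted(graph.lineage("gossip corner")))  # ['bench', 'tree', 'waiting spot']
```

Nothing in this structure marks a label as right or wrong; it only records where a category came from and what it means to the people who made it.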

Step 3: Drawing Data

Use the personal categories you’ve just created to annotate other images, creating data. There are two annotation options: label and imagine. You can choose to annotate the image as it currently exists, or as you wish it existed.
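The two annotation options can be sketched as a flag stored with each drawn annotation. This is an illustrative record only; the field names, the box layout and the example values are assumptions rather than the app's actual format.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List, Tuple


class Mode(Enum):
    LABEL = "label"      # annotate the image as it currently exists
    IMAGINE = "imagine"  # annotate the image as you wish it existed


@dataclass(frozen=True)
class Annotation:
    image_id: str                            # hypothetical identifier for a Street View image
    category: str                            # one of the user's own categories
    box: Tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max)
    mode: Mode


def split_by_mode(annotations: List[Annotation]) -> Dict[Mode, List[Annotation]]:
    """Group annotations so observed and imagined data can be handled separately."""
    return {mode: [a for a in annotations if a.mode is mode] for mode in Mode}


# Made-up annotations on the same image.
annotations = [
    Annotation("img-001", "waiting spot", (110, 60, 210, 170), Mode.LABEL),
    Annotation("img-001", "gossip corner", (300, 90, 380, 200), Mode.IMAGINE),
]
grouped = split_by_mode(annotations)
print(len(grouped[Mode.LABEL]), len(grouped[Mode.IMAGINE]))  # 1 1
```

Storing the mode with each annotation keeps imagined data distinguishable from observed data later on, whichever way the two are eventually combined.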

Step 4: Updating the ML Model

Once submitted, the model is retrained using your data, adding your categories into the model. Future contributors may relabel your data, recontextualizing it to their local neighborhoods.
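How the retraining works is not described here. One small piece that can be sketched is adding the new categories to the model's label set before retraining. The example below assumes, purely for illustration, that the model keeps a mapping from category name to a contiguous integer index; the function name and labels are hypothetical.

```python
from typing import Dict, Iterable


def extend_label_index(label_index: Dict[str, int], new_categories: Iterable[str]) -> Dict[str, int]:
    """Return a copy of the label index with unseen categories appended at the end.

    Assumes existing indices are contiguous (0..n-1), so the next free index
    is simply the current size of the mapping.
    """
    updated = dict(label_index)
    for name in new_categories:
        if name not in updated:
            updated[name] = len(updated)
    return updated


# Made-up starting labels and contributed categories.
current = {"bench": 0, "tree": 1}
updated = extend_label_index(current, ["waiting spot", "tree", "gossip corner"])
print(updated)  # {'bench': 0, 'tree': 1, 'waiting spot': 2, 'gossip corner': 3}
```

Existing labels keep their indices, so earlier contributions stay in the model and remain available for future relabeling.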